PROCIP: our internal tool for safer and faster technology selection

Choosing the right reactor technology as early as possible is one of the most powerful levers for ensuring the success of a chemical process, particularly...



Step into the daily lives of the Processium teams through a series of interviews that give voice to those who steer our projects every day. A deep dive into the know-how, journeys, and passion that drive our company forward.

From her early days studying chemical engineering in Lisbon to the publication of work acclaimed by the international scientific community, Dr. Rita Ferreira Alves has always followed a common thread: understanding, modeling, and predicting the behavior of complex processes. From custom modeling to technology selection tools and AI, her work demonstrates a rigor and vision that resonate strongly with Processium's DNA. Today, she shares her perspective on the digital age in industrial process development and the growing role of modeling in this transformation.

I began studying chemical engineering at the Instituto Superior Técnico (University of Lisbon) in 2009. From the very first year, I realized that it wasn't the experimental work in laboratories that fascinated me the most, but rather the analysis and processing of the resulting data. That was really the beginning of my passion for modeling, and that same year I signed up for a Matlab course as well as Fortran lessons (Fortran was still a very popular programming language at the time).

For my second-year master's internship, I came to France to work at the CP2M laboratory. The subject of my internship, modeling a reactor for ethylene polymerization, became the subject of my thesis, during which I worked on a multiscale model of a fluidized bed reactor for the gas-phase production of polyethylene. The model was very complex to code and took me four years to develop, since there was no existing basis to build on. We ultimately published four articles based on this thesis, which were very well received by the international scientific community.

After completing my thesis in 2021, I joined Processium as a process engineer. I was fortunate to join a company with strong potential in modeling, at the heart of an environment dedicated to R&D. Very quickly, the first advanced modeling projects began to take shape. We created several working groups focused on Matlab and the development of models for chemical reactors, before extending these ideas to other areas such as adsorption and fermentation.

In 2023, I took over as head of a small “Digital Skills” team, which now consists of four engineers, a work-study student, and myself as project manager. Together, we have broadened our field of expertise beyond Matlab, working in an increasingly collaborative manner with the rest of the Processium teams. We have also integrated AI skills into our team.

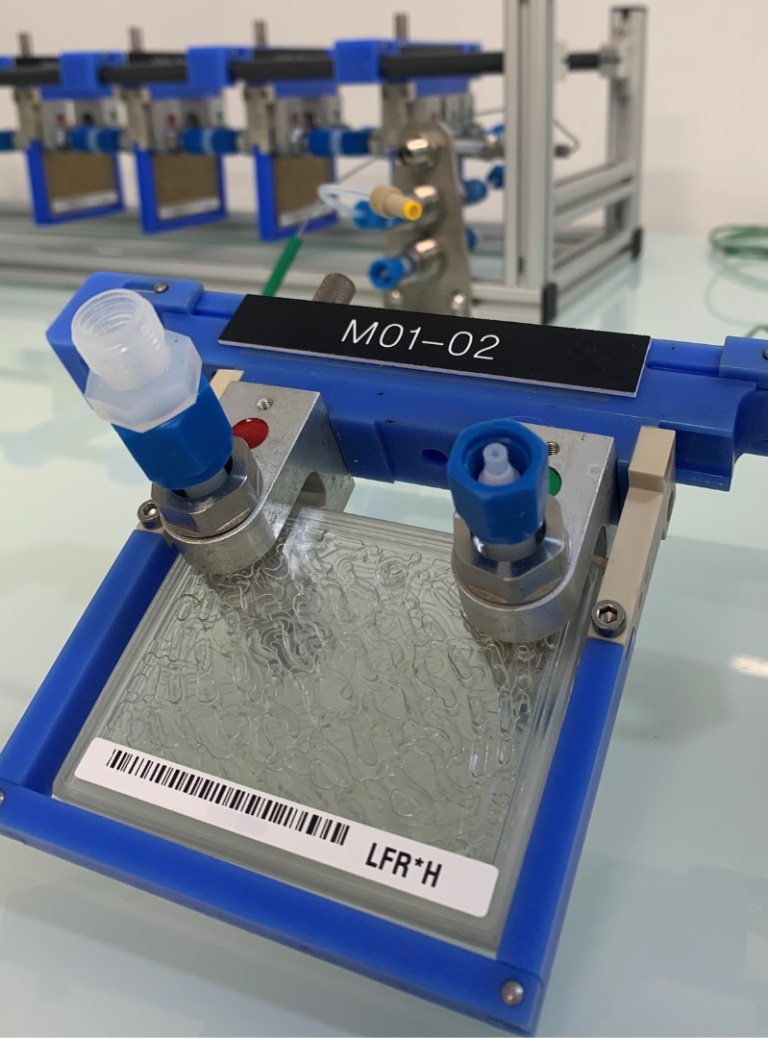

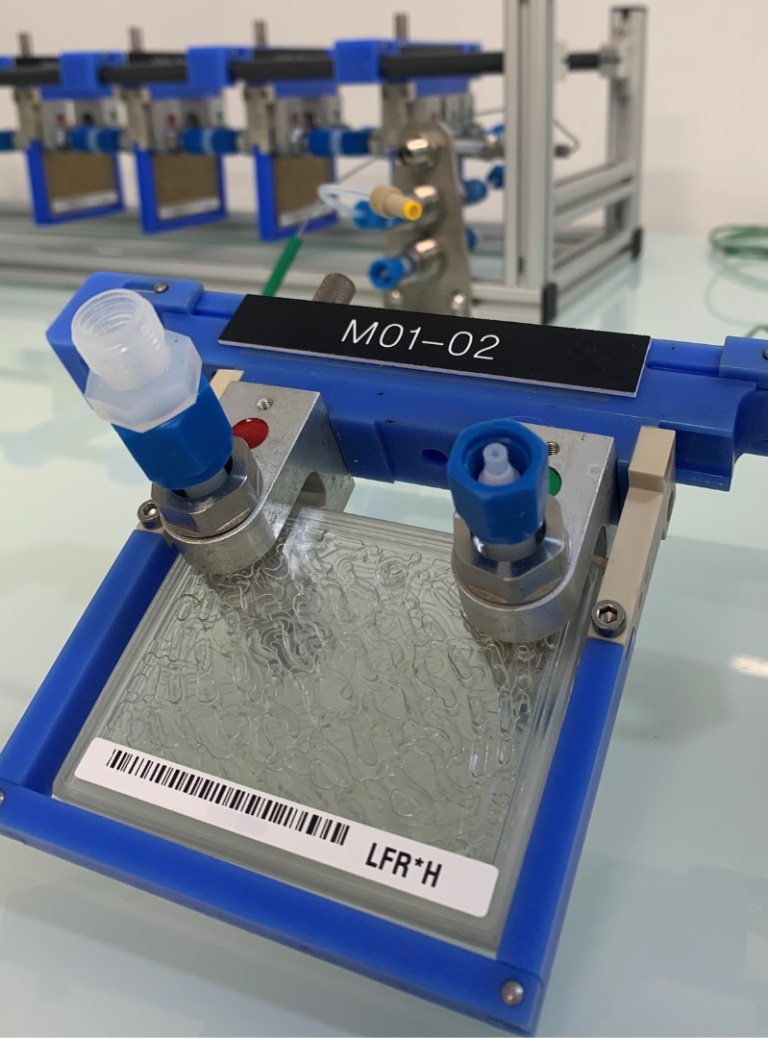

At Processium, we use several digital tools that complement each other at different stages of our modeling projects. The foundation is e-thermo. It provides us with all the thermodynamic data that we then use as input for our models. In a way, e-thermo is our common ground: all our modeling starts from there. Next, we use tools such as Procip, which helps us choose the most suitable reactor technology based on the customer's specifications.

Finally, at Processium, we take a pragmatic approach: we always favor the simplest, most robust solution before adding complexity. That's why we always start our modeling with commercial tools (Aspen, ProSim). If these tools cannot adequately represent the process, we add complexity with custom developments (Matlab, Python, etc.). For artificial intelligence developments, we mainly use Python libraries.

Modeling is where two different worlds meet: coding and process engineering. That is exactly where my team and I work.

We use modeling at different phases of process development. It can guide experimental validation by helping us define which tests to perform. It can also help us understand what happened during the tests: if I use my model to predict the outcome of a test and the measurements do not match, the gap points to information I may be missing in my process development or design.
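As a minimal sketch of that second use, assuming a simple first-order rate law (an assumption made for illustration, not a description of Processium's models), the snippet below fits a rate constant to hypothetical concentration measurements and computes the residuals between model and test. Small, random residuals suggest the model captures the test; residuals that drift systematically would point to missing information, such as a side reaction or a different rate law. The function names and data are hypothetical.

```python
import math

def fit_first_order_k(times, concentrations):
    """Least-squares estimate of k for C(t) = C0 * exp(-k * t).

    Linearizes the model as ln(C / C0) = -k * t and fits a line through the origin.
    Assumes the first point is at t = 0, so C0 = concentrations[0].
    """
    c0 = concentrations[0]
    ys = [math.log(c / c0) for c in concentrations]
    # Slope of a line through the origin: sum(t * y) / sum(t * t)
    num = sum(t * y for t, y in zip(times, ys))
    den = sum(t * t for t in times)
    return -num / den

def predict(c0, k, t):
    """Model prediction of concentration at time t."""
    return c0 * math.exp(-k * t)

# Hypothetical lab data: time in min, concentration in mol/L
times = [0.0, 10.0, 20.0, 30.0, 40.0]
measured = [1.0, 0.607, 0.368, 0.223, 0.135]

k = fit_first_order_k(times, measured)  # rate constant, 1/min
residuals = [m - predict(measured[0], k, t) for t, m in zip(times, measured)]
print(f"k = {k:.4f} 1/min, residuals = {[round(r, 4) for r in residuals]}")
```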

A final use case is the scale-up of a unit operation. This is the main application of modeling: performing the scale-up while minimizing the associated risks. We can support each stage of a scale-up with modeling, which saves a huge amount of time and money while reducing energy consumption, effluent generation, and so on. In environmental terms, simulating a process is far less resource-intensive than running the equivalent lab tests.
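One reason scale-up carries risk can be sketched with simple geometry, assuming geometrically similar cylindrical vessels cooled through a side-wall jacket (an illustrative simplification, not a specific Processium method): the wall area available for heat exchange grows with the square of the diameter while the volume grows with its cube, so the area per unit volume falls as the vessel gets bigger. The helper names and the example volumes are hypothetical.

```python
import math

def vessel_diameter(volume_m3, aspect_ratio=1.0):
    """Diameter (m) of a cylinder with height H = aspect_ratio * D holding volume_m3."""
    # V = (pi / 4) * D**2 * H = (pi / 4) * aspect_ratio * D**3
    return (4.0 * volume_m3 / (math.pi * aspect_ratio)) ** (1.0 / 3.0)

def jacket_area_per_volume(volume_m3, aspect_ratio=1.0):
    """Side-wall heat-exchange area per unit volume (1/m); the vessel bottom is ignored."""
    d = vessel_diameter(volume_m3, aspect_ratio)
    area = math.pi * d * (aspect_ratio * d)
    return area / volume_m3

def cooling_capacity_per_volume(volume_m3, u_w_m2k, delta_t_k, aspect_ratio=1.0):
    """Heat the jacket can remove per unit volume (W/m3): U * (A / V) * dT."""
    return u_w_m2k * jacket_area_per_volume(volume_m3, aspect_ratio) * delta_t_k

lab = jacket_area_per_volume(0.001)   # 1 L lab vessel
plant = jacket_area_per_volume(10.0)  # 10 m3 vessel with the same shape
print(f"A/V lab: {lab:.1f} 1/m, plant: {plant:.2f} 1/m, ratio: {lab / plant:.1f}")
```

Going from a 1 L vessel to a 10 m³ vessel of the same shape cuts the jacket area per unit volume by a factor of (10 / 0.001)^(1/3), about 21.5, so a heat load that is easy to remove in the lab can become limiting at plant scale.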

We recently worked on a particularly interesting case study: a client asked us to help them industrialize a highly exothermic polymerization reaction. In this type of process, the temperature rises sharply, which can alter the properties of the polymers produced. The client did not know how to control this phenomenon. Thanks to Procip, we were first able to conduct an initial screening of the various possible reaction technologies. Then, using more detailed modeling, we selected the most suitable technology and proposed a reactor design that they were then able to test internally. The result: the client saved a considerable amount of time on the laboratory testing phase. For us, developing the model and reporting the results took about three months, whereas a conventional approach would have taken much longer.
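To illustrate why a highly exothermic reaction narrows the choice of technology, here is a minimal sketch of a cooled batch reactor with first-order Arrhenius kinetics, integrated with explicit Euler steps. Every parameter value is a hypothetical placeholder, not data from the client case, and a real screening would rely on validated kinetics and thermodynamic data. The function reports the peak temperature for a given cooling capacity per unit volume (UA/V, in W/(m³·K)).

```python
import math

R = 8.314  # gas constant, J/(mol K)

def adiabatic_rise(dH, C0, rho_cp):
    """Adiabatic temperature rise (K): dT_ad = (-dH) * C0 / (rho * cp)."""
    return -dH * C0 / rho_cp

def simulate_batch(ua_per_volume, t_end=3600.0, dt=0.5,
                   T0=343.15, Tj=343.15, C0=2000.0,
                   k_ref=1e-3, T_ref=343.15, Ea=80e3,
                   dH=-80e3, rho_cp=1.8e6):
    """Peak temperature (K) of a cooled batch reactor with first-order kinetics.

    Explicit Euler on dC/dt = -k(T) C and
    rho_cp dT/dt = (-dH) k(T) C - UA/V (T - Tj).
    All defaults are hypothetical placeholders (SI units: mol/m3, J/mol, J/(m3 K)).
    """
    C, T, T_peak = C0, T0, T0
    for _ in range(int(t_end / dt)):
        k = k_ref * math.exp(-Ea / R * (1.0 / T - 1.0 / T_ref))  # Arrhenius
        rate = k * C                      # reaction rate, mol/(m3 s)
        q_gen = -dH * rate                # heat released, W/m3
        q_rem = ua_per_volume * (T - Tj)  # heat removed by the jacket, W/m3
        C = max(C - rate * dt, 0.0)       # guard against overshoot if dt is too large
        T += (q_gen - q_rem) / rho_cp * dt
        T_peak = max(T_peak, T)
    return T_peak

dT_ad = adiabatic_rise(-80e3, 2000.0, 1.8e6)
for ua in (1e5, 1e3, 0.0):  # well cooled, poorly cooled, adiabatic
    print(f"UA/V = {ua:>8.0f} W/(m3 K): peak {simulate_batch(ua) - 343.15:5.1f} K above start")
print(f"adiabatic rise: {dT_ad:.1f} K")
```

With these placeholder values the adiabatic temperature rise is about 89 K: a well-cooled reactor stays within a few kelvin of the jacket, while a poorly cooled one approaches the adiabatic limit. That contrast is the kind of signal an initial technology screening looks for.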

The main challenge is often the lack of experimental data. When a model cannot be compared with laboratory data, there is always a risk that it will not be fully representative of reality. This is particularly true for new molecules, for which there is still little documentation and for which it is difficult to feed a model correctly without experimental testing. Modeling is therefore not a magic solution that can replace experimentation: it is a complementary approach. It generally only comes into play after the basic data has been acquired, to prevent bad “input” data from leading to bad “output” results. This is where human experience plays a key role in assessing the level of confidence that can be placed in a model. Moreover, the more we practice modeling, the more we become aware of its complexity and uncertainties. It is possible to be sure that the mathematical equations used are correct without being sure that reality is correctly represented (and vice versa).
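One way to make that level of confidence visible is to propagate input uncertainty through the model. The sketch below is a simple Monte Carlo illustration, assuming a first-order reaction whose rate constant is only known within a lognormal spread; the numbers and function names are hypothetical, not taken from a Processium project. It returns the mean predicted conversion together with a 5th to 95th percentile band.

```python
import math
import random
import statistics

def conversion(k, tau):
    """Conversion of a first-order reaction after time tau: X = 1 - exp(-k * tau)."""
    return 1.0 - math.exp(-k * tau)

def propagate_k_uncertainty(k_nominal, sigma_ln_k, tau, n=20000, seed=0):
    """Monte Carlo: sample k from a lognormal around k_nominal; return (mean, p05, p95) of X."""
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    samples = sorted(
        conversion(k_nominal * math.exp(rng.gauss(0.0, sigma_ln_k)), tau)
        for _ in range(n)
    )
    return statistics.mean(samples), samples[int(0.05 * n)], samples[int(0.95 * n)]

# Hypothetical inputs: k in 1/min, residence time in min
mean_x, p05, p95 = propagate_k_uncertainty(k_nominal=0.05, sigma_ln_k=0.3, tau=30.0)
print(f"nominal X = {conversion(0.05, 30.0):.2f}, mean = {mean_x:.2f}, "
      f"90% band = [{p05:.2f}, {p95:.2f}]")
```

Here the point estimate is a conversion of about 78%, but with σ(ln k) = 0.3 the 90% band spans roughly 60% to 91%. A band that wide is a signal to acquire more data before relying on the model for design.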

In 2022, I began a training course in artificial intelligence applied to process engineering at the Technical University of Denmark (DTU), and in 2024, we began implementing AI in Processium's digital developments. Thermodynamics is currently the field of process engineering where data is most abundant and exploitable for AI. Databases such as e-thermo already enable us to train our AI models to predict physical properties, and this is a project we are actively developing in-house.

Today, AI can be used at all stages of process development, as well as in industrial control once processes are operational. Process engineering is a unique field. Unlike large language models such as ChatGPT, which are trained on huge volumes of data, our goal is not to train AI on massive amounts of information but to verify and optimize the process using continuously generated data.

In my view, the next big boom in the sector will come from online analysis tools capable of near-instantaneous measurements. These tools will give us an unprecedented volume of data. Once this data is available in real time, we will be able to develop hybrid simulation and modeling tools that combine conventional simulation with AI in real time. Processium is already actively involved in AI development. We are currently conducting proof-of-concept studies on various topics before we can offer new services to our clients and deploy internal tools to assist engineers.
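A hybrid model in its simplest form keeps a mechanistic (first-principles) prediction and lets a data-driven term learn what the mechanistic part misses. The sketch below assumes a one-variable linear correction fitted by ordinary least squares to the residuals; in practice the correction could be any machine-learning regressor, refitted as online analyzers stream new measurements. The class, the mechanistic function, and the synthetic data are all hypothetical.

```python
import math

def mechanistic_conversion(tau_min):
    """Hypothetical first-principles model: first-order reaction, k = 0.05 1/min."""
    return 1.0 - math.exp(-0.05 * tau_min)

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b * x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b

class HybridModel:
    """Mechanistic prediction plus a data-driven correction learned on its residuals."""

    def __init__(self, mechanistic):
        self.mechanistic = mechanistic
        self.a, self.b = 0.0, 0.0  # no correction until fitted

    def fit(self, xs, measured):
        residuals = [y - self.mechanistic(x) for x, y in zip(xs, measured)]
        self.a, self.b = fit_line(xs, residuals)
        return self

    def predict(self, x):
        return self.mechanistic(x) + self.a + self.b * x

# Synthetic "online analyzer" data: the process drifts away from the mechanistic
# model (e.g. an unmodeled side reaction); values are made up for illustration.
taus = [10.0, 20.0, 30.0, 40.0, 50.0]
measured = [mechanistic_conversion(t) + 0.01 - 0.002 * t for t in taus]

model = HybridModel(mechanistic_conversion).fit(taus, measured)
print(f"correction: a = {model.a:.4f}, b = {model.b:.4f}; X(25 min) = {model.predict(25.0):.3f}")
```

Because the data-driven term only has to learn the residual rather than the whole process behavior, it typically needs fewer measurements than a purely data-driven model.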

I would say that my job is similar to creating a video game. In a video game, you imagine an entire world, with a main character who must evolve and accomplish various missions. This world is designed by someone, and my job is a bit similar. The factory becomes the world of my own game, the product is the main character, and its missions correspond to the chemical transformations I have to model and simulate to obtain the desired final product. My role is to optimize the game, with the aim of finding out how my character can achieve their goal as quickly as possible.

Are you developing an ambitious and innovative R&D project where digital technology can make a difference?

📩 Contact us!

